The Turing Test, visually explained

Can AI really think?

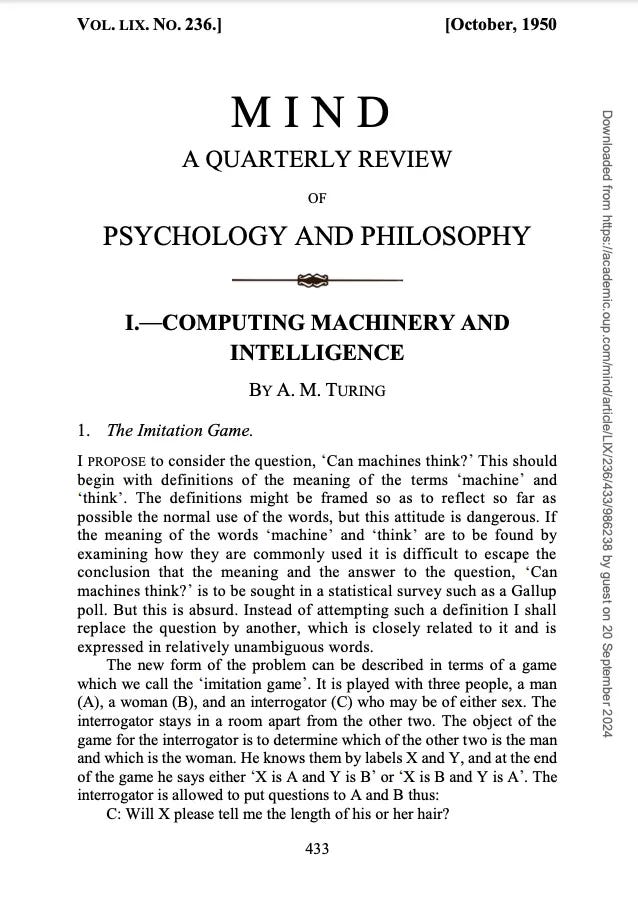

In 1950, mathematician and computer scientist Alan Turing proposed what became known as the Turing Test, a way to determine whether a machine exhibits human-level intelligence.

According to Turing, the question “Can machines think?” was flawed because “thinking” is difficult to define and measure. Instead, he proposed an alternative question that’s easier to observe and quantify: “Can machines imitate human intelligence and behaviour?”

In Turing’s words:

I propose to consider the question, ‘Can machines think?’ This should begin with definitions of the meaning of the terms ‘machine’ and ‘think’. The definitions might be framed so as to reflect so far as possible the normal use of the words, but this attitude is dangerous. If the meaning of the words ‘machine’ and ‘think’ are to be found by examining how they are commonly used it is difficult to escape the conclusion that the meaning and the answer to the question, ‘Can machines think?’ is to be sought in a statistical survey such as a Gallup poll. But this is absurd. Instead of attempting such a definition I shall replace the question by another, which is closely related to it and is expressed in relatively unambiguous words.

What’s the Turing Test?

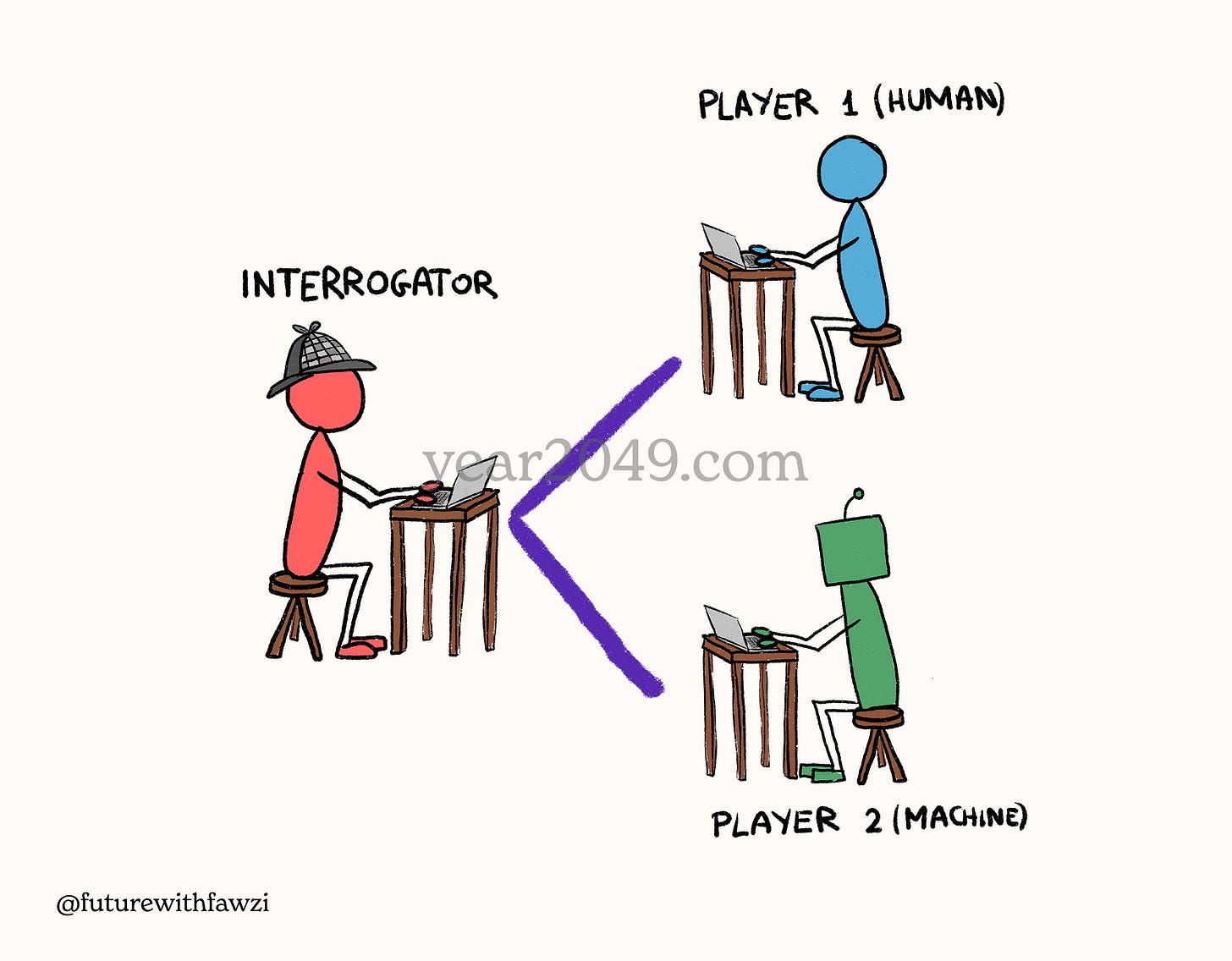

The Turing Test, originally called the Imitation Game, is a game involving a human interrogator and two players: one human and one machine. The interrogator talks to both players through a chat interface and must determine which of them is the human. If the machine fools the interrogator and gets identified as the human, it has passed the Turing Test.
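For readers who like to see the rules as code, here is a minimal Python sketch of the game described above. Everything in it is an assumption made for illustration: the names `imitation_game`, `toy_human`, `toy_machine`, and `gullible_judge` are hypothetical, and the toy players are canned stand-ins, not real conversational agents.

```python
import random
from typing import Callable, Dict, List

Player = Callable[[str], str]                  # question in, typed answer out
Judge = Callable[[Dict[str, List[str]]], str]  # transcripts in, label guessed to be human out


def imitation_game(human: Player, machine: Player, judge: Judge,
                   questions: List[str], rng: random.Random) -> bool:
    """Return True if the judge mistakes the machine for the human."""
    # Hide both identities behind the labels "A" and "B", in random order,
    # so the judge only ever sees text.
    players = [("human", human), ("machine", machine)]
    rng.shuffle(players)
    labelled = dict(zip(["A", "B"], players))

    # Every player answers every question.
    transcripts = {
        label: [respond(q) for q in questions]
        for label, (_, respond) in labelled.items()
    }

    guess = judge(transcripts)      # the label the judge believes is the human
    identity, _ = labelled[guess]
    return identity == "machine"    # the machine passes if it was picked as the human


# Hypothetical toy players and judge, for illustration only.
def toy_human(question: str) -> str:
    return "Hmm, let me think about that."


def toy_machine(question: str) -> str:
    return "I am definitely a person."


def gullible_judge(transcripts: Dict[str, List[str]]) -> str:
    # Trusts whoever claims to be a person.
    for label, answers in transcripts.items():
        if any("person" in answer for answer in answers):
            return label
    return "A"


passed = imitation_game(toy_human, toy_machine, gullible_judge,
                        ["Are you human?"], random.Random(0))
print(passed)  # True: this judge is fooled by the machine's claim
```

Notice that the function never checks whether the machine "thinks". The outcome depends entirely on the judge's guess, which is exactly what the test's critics object to.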

The appeal of the Turing Test is that it’s simple, measurable, and lets the interrogator ask any question without restriction to see how the machine responds. But the test has many critics who view it as a measure of deception rather than “real” intelligence.

John Searle and the Chinese Room

The philosopher John Searle is one of the Turing Test’s strongest critics. To demonstrate the test’s flaws, he proposed the “Chinese Room” argument.

Imagine a person who doesn’t understand Chinese locked inside a room with a rulebook, written in English, that explains how to respond to Chinese symbols. When a piece of Chinese text is passed into the room, the person follows the rulebook to write a response in Chinese. To people outside the room, the person locked inside appears to be a fluent Chinese speaker because their answers are coherent.

But in reality, the person has no idea what the symbols mean or what they’re saying, and is just matching patterns based on the inputs they’re receiving.
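A toy version of the room can be sketched in a few lines of Python. This is only an illustration under loud assumptions: Searle's imagined rulebook would be vastly more elaborate than a lookup table, and the names `RULEBOOK`, `FALLBACK`, and `chinese_room`, along with the sample phrases, are made up for this example.

```python
# A hypothetical, tiny "rulebook": incoming symbols mapped to outgoing symbols.
RULEBOOK = {
    "你好吗？": "我很好，谢谢。",      # "How are you?" -> "I'm fine, thanks."
    "你叫什么名字？": "我叫小明。",    # "What's your name?" -> "My name is Xiaoming."
}
FALLBACK = "请再说一遍。"              # "Please say that again."


def chinese_room(message: str) -> str:
    """Match the incoming symbols against the rulebook and return the listed reply."""
    # Nothing here represents what the symbols mean; it is pure pattern matching.
    return RULEBOOK.get(message, FALLBACK)


print(chinese_room("你好吗？"))
```

The reply looks fluent from the outside, yet the program holds no notion of greetings, names, or politeness. The English comments are there for the reader, not for the room.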

According to Searle, passing the Turing Test doesn’t prove real understanding or consciousness because the result depends only on whether the human interrogator was fooled by the machine. The simulation of thinking and understanding doesn’t prove their existence.

In a way, Turing and Searle acknowledge the same limitations: “thinking” and “understanding” are hard to measure in the context of “intelligent” machines.
